SEO site audit for duplicates: how parsing sitemap.xml reveals hidden problems

Contents

- Introduction

- What sitemap.xml is and why it is needed

- What duplicates are found on websites

- Problems with hreflang on multilingual websites

- Broken pages in the site map

- How sitemap parsing helps you find problems

- Typical reasons for duplicates

- Practical SEO Audit Checklist

- Conclusion

- Full listing of the audit command

Introduction

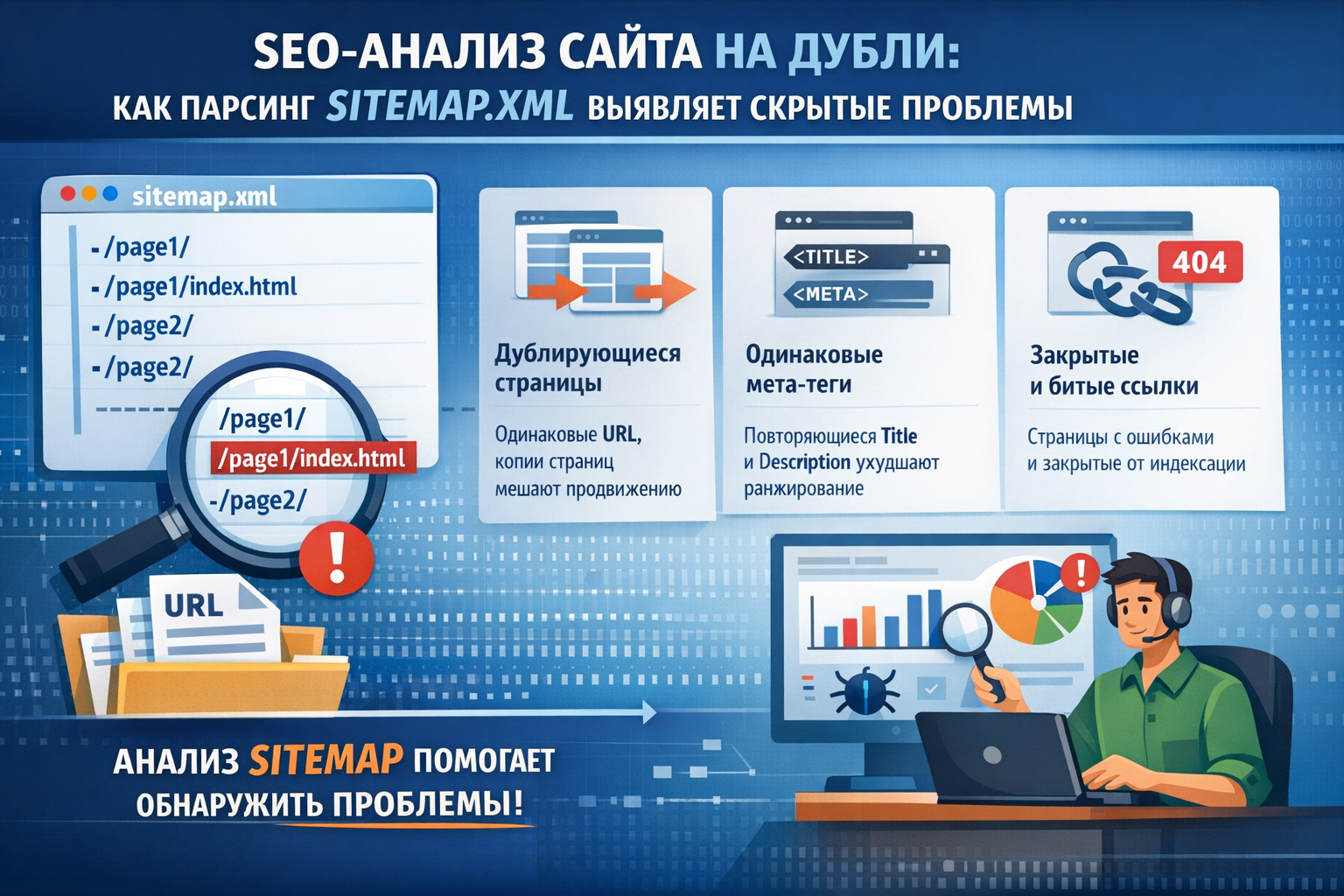

Duplicates on a website are one of the most insidious SEO problems. They do not cause errors in the browser, do not break the layout, and do not get in the way of users. But for search engines, duplicate content is a serious signal of a low-quality resource. Google may lower a site's rankings if it finds identical titles, identical descriptions, or repeated URLs in the site's structure.

Parsing sitemap.xml makes it possible to find these problems automatically.

What sitemap.xml is and why it is needed

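sitemap.xml is an XML file that lists the URLs of a site. Each entry can carry optional metadata: lastmod, changefreq, and priority.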

Search robots use this file as a map to crawl the site. If a page is listed in the sitemap, the robot is likely to visit it early. If a page is missing from the map, it may be indexed with a delay or not indexed at all.

That is why the quality of the sitemap directly affects SEO indicators:

- Indexing speed

- Completeness of coverage

- A signal of trust

But if the map contains errors (duplicate URLs, broken links, incorrect annotations), the effect is reversed. The robot spends its crawl budget on the same pages again and again, and the site accumulates negative quality signals.

What duplicates are found on websites

When auditing a sitemap, there are three main types of duplicates that need to be checked. Each of them affects SEO in different ways, but they all reduce the effectiveness of indexing.

Duplicate URLs in the sitemap itself

The simplest case is the same address, for example /services or /contacts, appearing in the sitemap more than once.

The search robot will process both occurrences, but it will spend twice the resources. On large sites with thousands of pages, this leads to a significant loss of crawl budget.
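A minimal sketch of this check in plain PHP, assuming the `<loc>` values have already been read into an array. The function name `findDuplicateUrls` and the example.com addresses are illustrative, not part of the audit command.

```php
<?php

declare(strict_types=1);

/**
 * Return every URL that occurs more than once, with its number of occurrences.
 *
 * @param  list<string>       $urls <loc> values in sitemap order
 * @return array<string, int> duplicate URL => occurrences
 */
function findDuplicateUrls(array $urls): array
{
    // array_count_values() builds a map of value => number of occurrences.
    $counts = array_count_values($urls);

    // Keep only the URLs listed two or more times.
    return array_filter($counts, fn (int $count): bool => $count > 1);
}

// Hypothetical sitemap content: /services is listed twice.
$locs = [
    'https://example.com/services',
    'https://example.com/contacts',
    'https://example.com/services',
];

print_r(findDuplicateUrls($locs));
```

The comparison here is an exact string match, so `/services` and `/services/` count as different URLs. Whether they should be treated as one depends on how the site handles trailing slashes.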

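Duplicate <title>

The <title> tag is one of the main ways a search engine tells pages apart. When several pages share the same <title>, that distinction is lost.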

A typical example: by default, the CMS substitutes the site name into every title, and the developer forgets to set a unique title for each page. As a result, dozens of pages receive a title like "Company name - Home".
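The check itself can be sketched as follows, assuming the HTML of each page has already been downloaded. The helper names `extractTitle` and `groupByTitle` and the sample pages are hypothetical. The regular expression is a simplification that takes the first `<title>` it finds.

```php
<?php

declare(strict_types=1);

/**
 * Extract the text of the first <title> element, or null if it is absent or empty.
 */
function extractTitle(string $html): ?string
{
    if (! preg_match('/<title[^>]*>(.*?)<\/title>/si', $html, $matches)) {
        return null;
    }

    $title = trim(html_entity_decode($matches[1], ENT_QUOTES | ENT_HTML5, 'UTF-8'));

    return $title !== '' ? $title : null;
}

/**
 * Group page URLs by title and keep only titles shared by two or more pages.
 *
 * @param  array<string, string>       $pages URL => HTML
 * @return array<string, list<string>> title => URLs that share it
 */
function groupByTitle(array $pages): array
{
    $groups = [];

    foreach ($pages as $url => $html) {
        $title = extractTitle($html);
        if ($title !== null) {
            $groups[$title][] = $url;
        }
    }

    return array_filter($groups, fn (array $urls): bool => count($urls) > 1);
}

// Hypothetical pages: two of them inherited the template title.
$pages = [
    'https://example.com/'         => '<title>Company name - Home</title>',
    'https://example.com/services' => '<title>Company name - Home</title>',
    'https://example.com/contacts' => '<title>Contacts</title>',
];

print_r(groupByTitle($pages));
```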

Duplicate <meta description>

The meta description is often shown in the search snippet, so it affects the click-through rate (CTR).

A common situation is that the description is set by default at the template level, and if the author has not filled in the field manually, the page receives a general description of the site. During the audit, such duplicates are easy to detect — several pages will have the same description value.
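As an illustration, the description can be read with PHP's DOM extension, which does not depend on the order of the `name` and `content` attributes. This is a sketch under assumptions: the function name `metaDescription` and the sample markup are hypothetical, and pages are assumed to be ASCII or to declare their charset, since `DOMDocument::loadHTML()` does not default to UTF-8.

```php
<?php

declare(strict_types=1);

/**
 * Return the content of <meta name="description">, or null if it is absent or empty.
 */
function metaDescription(string $html): ?string
{
    if (trim($html) === '') {
        return null; // loadHTML() rejects an empty string
    }

    // Real-world HTML is rarely valid, so collect parser warnings silently.
    $previous = libxml_use_internal_errors(true);
    $dom = new DOMDocument();
    $loaded = $dom->loadHTML($html);
    libxml_clear_errors();
    libxml_use_internal_errors($previous);

    if (! $loaded) {
        return null;
    }

    foreach ($dom->getElementsByTagName('meta') as $meta) {
        if (strtolower($meta->getAttribute('name')) === 'description') {
            $content = trim($meta->getAttribute('content'));

            return $content !== '' ? $content : null;
        }
    }

    return null;
}

// Hypothetical pages: both fell back to the template-level description.
$home = '<html><head><meta name="description" content="General description of the site"></head><body></body></html>';
$blog = '<html><head><meta content="General description of the site" name="description"></head><body></body></html>';

var_dump(metaDescription($home) === metaDescription($blog));
```

Grouping pages by the returned value then works the same way as for titles.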

Problems with hreflang on multilingual websites

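The hreflang attribute tells search engines which language or regional version of a page to show to which audience.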

The three most common problems with hreflang are:

- No backlink. If page A lists page B as an alternate, page B must list page A in return.

- Lack of self-reference.

- A link to a non-existent page.

It is almost impossible to detect these problems manually on a site with hundreds of pages. Automatic sitemap parsing with hreflang link verification is the most reliable way to keep a multilingual website in good condition.
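The three checks can be sketched in plain PHP, assuming the hreflang annotations have already been read from the sitemap into an array keyed by page URL. The function name `checkHreflang`, the message wording, and the example.com URLs are illustrative. A "non-existent page" is approximated here as "not listed in the sitemap".

```php
<?php

declare(strict_types=1);

/**
 * Validate hreflang annotations taken from a sitemap.
 *
 * @param  array<string, array<string, string>> $entries page URL => [lang => alternate URL]
 * @return list<string> human-readable issues
 */
function checkHreflang(array $entries): array
{
    $issues = [];

    foreach ($entries as $loc => $alternates) {
        // Every page must list itself among its alternates.
        if (! in_array($loc, $alternates, true)) {
            $issues[] = "{$loc}: missing self-reference";
        }

        foreach ($alternates as $lang => $href) {
            if ($href === $loc) {
                continue;
            }

            if (! isset($entries[$href])) {
                // The target is not in the sitemap (or has no annotations at all).
                $issues[] = "{$loc}: hreflang={$lang} points to unknown page {$href}";
            } elseif (! in_array($loc, $entries[$href], true)) {
                $issues[] = "{$loc}: {$href} does not link back";
            }
        }
    }

    return $issues;
}

// Hypothetical data: /de/ forgets to link back to /en/ and points to a missing /fr/.
$entries = [
    'https://example.com/en/' => [
        'en' => 'https://example.com/en/',
        'de' => 'https://example.com/de/',
    ],
    'https://example.com/de/' => [
        'de' => 'https://example.com/de/',
        'fr' => 'https://example.com/fr/',
    ],
];

print_r(checkHreflang($entries));
```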

Broken pages in the site map

Every URL listed in the sitemap should return 200 OK.

When the search robot finds a link to a non-existent page in the sitemap, it interprets this as a sign that the site is poorly maintained. Single errors will not lead to disaster, but the systematic presence of broken links in the sitemap reduces the search engine's trust in the site as a whole.

Checking the HTTP status of all URLs from the site map allows you to:

- Identify deleted pages that you forgot to remove from the sitemap

- Detect "silent" server errors that are not visible to ordinary visitors

- Find redirection chains that slow down indexing
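Redirect chains are the least obvious item in this list, so here is a minimal sketch of how one could be traced. The function `traceRedirects` and the `$fetch` callable are hypothetical: `$fetch` is assumed to return the status code and the `Location` header of a single request without following redirects. In the example it is a stub backed by an array, so the code runs without network access.

```php
<?php

declare(strict_types=1);

/**
 * Follow redirects one hop at a time and return the chain of [url, status] pairs.
 *
 * @param  callable(string): array{status: int, location: ?string} $fetch
 * @return list<array{0: string, 1: int}>
 */
function traceRedirects(string $url, callable $fetch, int $maxHops = 5): array
{
    $chain = [];

    for ($hop = 0; $hop <= $maxHops; $hop++) {
        $response = $fetch($url);
        $chain[] = [$url, $response['status']];

        $isRedirect = $response['status'] >= 300 && $response['status'] < 400;
        if (! $isRedirect || $response['location'] === null) {
            break; // final answer reached
        }

        $url = $response['location'];
    }

    return $chain;
}

// Stubbed responses standing in for real HTTP requests.
$fake = [
    'https://example.com/old'   => ['status' => 301, 'location' => 'https://example.com/new'],
    'https://example.com/new'   => ['status' => 302, 'location' => 'https://example.com/final'],
    'https://example.com/final' => ['status' => 200, 'location' => null],
];
$fetch = fn (string $u): array => $fake[$u] ?? ['status' => 404, 'location' => null];

$chain = traceRedirects('https://example.com/old', $fetch);

foreach ($chain as [$hopUrl, $status]) {
    echo "{$status} {$hopUrl}\n";
}
```

A chain with more than two entries means the sitemap URL goes through more than one redirect. Such an entry is usually better replaced with the final address.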

How sitemap parsing helps you find problems

Manual site map verification is ineffective even for small projects. A website with a blog, a catalog of services and a portfolio easily reaches 100-200 pages, and with a multilingual version — twice as many. Automatic parsing solves this problem in seconds.

An audit based on parsing sitemap.xml includes several stages:

- Loading and parsing XML.

- Checking for duplicate URLs.

- hreflang validation.

- Page crawling.

- Extraction of meta data.

- Grouping and searching for duplicates.

The result of the parsing is a structured report that shows the exact number of issues in each category and specific URLs that require attention.
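The first stage, loading and parsing the XML, can be sketched with SimpleXML in plain PHP. The function name `parseSitemapXml` and the inline sample sitemap are illustrative. A real audit would download the file first, and this sketch does not handle sitemap index files.

```php
<?php

declare(strict_types=1);

/**
 * Parse sitemap XML into a list of entries with their hreflang alternates.
 *
 * @return list<array{loc: string, hreflang: array<string, string>}>
 */
function parseSitemapXml(string $xml): array
{
    // Collect libxml errors instead of emitting PHP warnings.
    $previous = libxml_use_internal_errors(true);
    $doc = simplexml_load_string($xml);
    libxml_clear_errors();
    libxml_use_internal_errors($previous);

    if ($doc === false) {
        throw new InvalidArgumentException('Invalid sitemap XML');
    }

    $entries = [];

    foreach ($doc->url as $url) {
        $alternates = [];

        // hreflang links live in the XHTML namespace: <xhtml:link ... />
        foreach ($url->children('http://www.w3.org/1999/xhtml')->link as $link) {
            $attrs = $link->attributes();
            $alternates[(string) $attrs['hreflang']] = (string) $attrs['href'];
        }

        $entries[] = ['loc' => trim((string) $url->loc), 'hreflang' => $alternates];
    }

    return $entries;
}

$xml = <<<'XML'
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9"
        xmlns:xhtml="http://www.w3.org/1999/xhtml">
  <url>
    <loc>https://example.com/en/</loc>
    <xhtml:link rel="alternate" hreflang="en" href="https://example.com/en/"/>
    <xhtml:link rel="alternate" hreflang="de" href="https://example.com/de/"/>
  </url>
  <url>
    <loc>https://example.com/de/</loc>
  </url>
</urlset>
XML;

$entries = parseSitemapXml($xml);
print_r($entries);
```

The remaining stages operate on this array: duplicate detection on the `loc` values, hreflang validation on the `hreflang` maps, and page crawling on the unique URLs.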

Typical reasons for duplicates

Understanding the causes helps not only to correct, but also to prevent duplicates. Here are the most common situations:

- Mixing static and dynamic data.

- Template meta tags.

- Bulk database updates.

- Incorrect hreflang generation.

- Forgotten deleted pages.
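The first cause can often be prevented where the sitemap is generated. Below is a minimal sketch, assuming the generator collects URLs from a hard-coded list of static pages and from a database query. The function name `mergeSitemapUrls` and both sample lists are hypothetical.

```php
<?php

declare(strict_types=1);

/**
 * Merge URL lists from several sources and drop exact duplicates,
 * keeping the first occurrence of each URL.
 *
 * @param  list<string> ...$sources
 * @return list<string>
 */
function mergeSitemapUrls(array ...$sources): array
{
    return array_values(array_unique(array_merge(...$sources)));
}

// Hypothetical sources: /services is both a static route and a database record.
$static = ['https://example.com/', 'https://example.com/services'];
$fromDatabase = ['https://example.com/services', 'https://example.com/blog/first-post'];

print_r(mergeSitemapUrls($static, $fromDatabase));
```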

Practical SEO Audit Checklist

Run the audit on a regular schedule, from once a week to once a month depending on how often the site changes.

What to check during each audit:

- URL Duplicates

- HTTP statuses

- Uniqueness of <title>

- Uniqueness of the <meta description>

- The correctness of hreflang

- Relevance of lastmod

- Completeness of the map
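Most of these items are plain comparisons, but the relevance of lastmod needs a rule. One possible rule is sketched below. The function name `checkLastmod` and the 365-day staleness threshold are assumptions made for illustration, not a recommendation from search engines.

```php
<?php

declare(strict_types=1);

/**
 * Classify a <lastmod> value. Returns null when the value looks fine,
 * otherwise a short description of the problem.
 */
function checkLastmod(?string $lastmod, DateTimeImmutable $now, int $maxAgeDays = 365): ?string
{
    if ($lastmod === null || trim($lastmod) === '') {
        return 'missing';
    }

    try {
        // Note: PHP's date parser is lenient and accepts more than the W3C datetime format.
        $date = new DateTimeImmutable($lastmod);
    } catch (Exception $e) {
        return 'unparsable';
    }

    if ($date > $now) {
        return 'in the future';
    }

    // Assumed threshold: anything older than $maxAgeDays is flagged for review.
    if ($date < $now->modify("-{$maxAgeDays} days")) {
        return 'stale';
    }

    return null;
}

// A fixed "now" keeps the example deterministic.
$now = new DateTimeImmutable('2025-01-15T00:00:00+00:00');

foreach (['2025-01-10', '2030-01-01', '2020-01-01', null] as $value) {
    echo ($value ?? '(none)') . ': ' . (checkLastmod($value, $now) ?? 'ok') . "\n";
}
```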

Automating these checks is the optimal solution. A script running on a schedule will detect the problem on the same day it appeared, rather than a month later during manual verification.

Conclusion

An SEO audit is not a one-off task but a systematic process.

Automatic site map parsing solves three key tasks:

- Speed

- Completeness

- Regularity

Investing in SEO audit automation pays off multiple times: you spend time setting it up once, and the system works for you all the time, protecting the site's position and ensuring high-quality indexing of all pages.

Full listing of the Laravel sitemap audit command

The complete source of the seo:audit-sitemap Artisan command is given below.

<?php

namespace App\Console\Commands;

use Illuminate\Console\Command;
use Illuminate\Http\Client\Pool;
use Illuminate\Http\Client\Response;
use Illuminate\Support\Collection;
use Illuminate\Support\Facades\Http;

class AuditSitemap extends Command
{
    protected $signature = 'seo:audit-sitemap
        {url : URL of the sitemap.xml to parse}
        {--timeout=15 : HTTP request timeout in seconds per page}
        {--concurrency=5 : Max concurrent HTTP requests when fetching pages}';

    protected $description = 'Parse sitemap.xml and detect duplicate URLs, <title>, <meta description>, broken pages and hreflang issues';

    private const XHTML_NS = 'http://www.w3.org/1999/xhtml';

    // URL => HTTP status or error message, filled while pages are fetched.
    private Collection $brokenPages;

    // Run all checks in order and return FAILURE if any of them found a problem.
    public function handle(): int
    {
        $this->brokenPages = collect();

        $sitemapUrl = $this->argument('url');
        $timeout = (int) $this->option('timeout');

        $this->info("Fetching sitemap: {$sitemapUrl}");

        $entries = $this->parseSitemap($sitemapUrl);

        if ($entries === null) {
            $this->error('Failed to fetch or parse the sitemap.');

            return self::FAILURE;
        }

        $locs = $entries->pluck('loc');

        $this->info("Found {$locs->count()} URL entries in the sitemap.");
        $this->newLine();

        $duplicateUrls = $locs->countBy()->filter(fn (int $count) => $count > 1);
        $this->reportDuplicateUrls($duplicateUrls);

        $hreflangIssues = $this->validateHreflang($entries);
        $this->reportHreflangIssues($hreflangIssues);

        $uniqueUrls = $locs->unique()->values();

        $this->info("Fetching {$uniqueUrls->count()} unique pages...");

        $pages = $this->fetchPages($uniqueUrls, $timeout);

        $this->newLine();

        $this->reportBrokenPages();

        $duplicateTitles = $this->findDuplicates($pages, 'title');
        $this->reportDuplicates($duplicateTitles, 'title');

        $duplicateDescriptions = $this->findDuplicates($pages, 'description');
        $this->reportDuplicates($duplicateDescriptions, 'meta description');

        $this->printSummary($duplicateUrls, $duplicateTitles, $duplicateDescriptions, $hreflangIssues);

        $hasIssues = $duplicateUrls->isNotEmpty()
            || $duplicateTitles->isNotEmpty()
            || $duplicateDescriptions->isNotEmpty()
            || $this->brokenPages->isNotEmpty()
            || $hreflangIssues->isNotEmpty();

        return $hasIssues ? self::FAILURE : self::SUCCESS;
    }

    // Download the sitemap and return its entries as ['loc' => ..., 'hreflang' => [...]], or null on failure.
    private function parseSitemap(string $url): ?Collection
    {
        try {
            $response = Http::timeout(15)->get($url);
        } catch (\Throwable $e) {
            $this->error("HTTP error: {$e->getMessage()}");

            return null;
        }

        if ($response->failed()) {
            return null;
        }

        // Suppress libxml warnings while parsing, then restore the previous setting.
        $previousUseErrors = libxml_use_internal_errors(true);
        $xml = simplexml_load_string($response->body());
        libxml_use_internal_errors($previousUseErrors);

        if ($xml === false) {
            return null;
        }

        $nodes = [];

        foreach ($xml->url as $node) {
            $nodes[] = $node;
        }

        return collect($nodes)
            ->map(fn (\SimpleXMLElement $node) => [
                'loc' => trim((string) $node->loc),
                'hreflang' => $this->parseHreflangLinks($node),
            ])
            ->filter(fn (array $entry) => $entry['loc'] !== '')
            ->values();
    }

    // Read <xhtml:link> children of a <url> node into a [hreflang => href] map.
    private function parseHreflangLinks(\SimpleXMLElement $node): array
    {
        $links = [];

        foreach ($node->children(self::XHTML_NS)->link as $link) {
            $links[] = $link;
        }

        return collect($links)
            ->mapWithKeys(function (\SimpleXMLElement $link) {
                $attrs = $link->attributes();

                return [(string) ($attrs['hreflang'] ?? '') => trim((string) ($attrs['href'] ?? ''))];
            })
            ->filter(fn (string $href, string $lang) => $lang !== '' && $href !== '')
            ->all();
    }

    // Check every annotated entry and return a flat list of hreflang issues.
    private function validateHreflang(Collection $entries): Collection
    {
        $locSet = $entries->pluck('loc')->flip();

        $hreflangMap = $entries
            ->filter(fn (array $e) => ! empty($e['hreflang']))
            ->pluck('hreflang', 'loc');

        return $hreflangMap
            ->flatMap(fn (array $alternates, string $loc) => $this->checkAlternates($loc, $alternates, $locSet, $hreflangMap))
            ->values();
    }

    // Check all alternates of one page, plus the self-reference requirement.
    private function checkAlternates(string $loc, array $alternates, Collection $locSet, Collection $hreflangMap): array
    {
        $issues = collect($alternates)
            ->flatMap(fn (string $href, string $lang) => $this->checkSingleAlternate($loc, $lang, $href, $locSet, $hreflangMap))
            ->all();

        $hasSelfRef = in_array($loc, $alternates, true);

        if (! $hasSelfRef) {
            $issues[] = [
                'type' => 'missing_self_ref',
                'loc' => $loc,
                'detail' => 'Missing self-referencing hreflang annotation',
            ];
        }

        return $issues;
    }

    // Check one alternate: it must be in the sitemap and must link back to $loc.
    private function checkSingleAlternate(string $loc, string $lang, string $href, Collection $locSet, Collection $hreflangMap): array
    {
        $issues = [];

        if (! $locSet->has($href)) {
            $issues[] = [
                'type' => 'missing_in_sitemap',
                'loc' => $loc,
                'detail' => "hreflang=\"{$lang}\" points to {$href} which is NOT in the sitemap",
            ];

            return $issues;
        }

        // A self-reference needs no reciprocity check.
        if ($href === $loc) {
            return $issues;
        }

        if (! $hreflangMap->has($href)) {
            $issues[] = [
                'type' => 'missing_reciprocal',
                'loc' => $loc,
                'detail' => "hreflang=\"{$lang}\" points to {$href}, but that page has no hreflang annotations",
            ];

            return $issues;
        }

        if (! in_array($loc, $hreflangMap->get($href), true)) {
            $issues[] = [
                'type' => 'missing_reciprocal',
                'loc' => $loc,
                'detail' => "hreflang=\"{$lang}\" points to {$href}, but that page does not link back",
            ];
        }

        return $issues;
    }

    // Fetch pages in chunks of --concurrency requests and return URL => extracted meta data.
    private function fetchPages(Collection $urls, int $timeout): Collection
    {
        $concurrency = max(1, (int) $this->option('concurrency'));

        $bar = $this->output->createProgressBar($urls->count());
        $bar->start();

        $pages = $urls->chunk($concurrency)
            ->flatMap(function (Collection $chunk) use ($timeout, $bar) {
                $chunkUrls = $chunk->values()->all();

                $responses = Http::pool(fn (Pool $pool) => collect($chunkUrls)
                    ->each(fn (string $pageUrl) => $pool->as($pageUrl)->timeout($timeout)->get($pageUrl))
                );

                return collect($chunkUrls)
                    ->mapWithKeys(function (string $pageUrl) use ($responses, $bar) {
                        $bar->advance();

                        return [$pageUrl => $this->processResponse($pageUrl, $responses[$pageUrl] ?? null)];
                    })
                    ->filter();
            });

        $bar->finish();
        $this->newLine();

        return $pages;
    }

    // Record non-200 responses as broken; return title and description for successful ones.
    private function processResponse(string $url, mixed $response): ?array
    {
        if ($response === null || $response instanceof \Throwable) {
            $this->brokenPages[$url] = $response instanceof \Throwable ? $response->getMessage() : 'No response';

            return null;
        }

        if ($response->status() !== 200) {
            $this->brokenPages[$url] = $response->status();
        }

        if ($response->failed()) {
            return null;
        }

        $html = $response->body();

        return [
            'title' => $this->extractTitle($html),
            'description' => $this->extractMetaDescription($html),
        ];
    }

    // Return the decoded text of the first <title>, or null if absent or empty.
    private function extractTitle(string $html): ?string
    {
        if (! preg_match('/<title[^>]*>(.*?)<\/title>/si', $html, $matches)) {
            return null;
        }

        $title = trim(html_entity_decode($matches[1], ENT_QUOTES | ENT_HTML5, 'UTF-8'));

        return $title !== '' ? $title : null;
    }

    // Return the meta description; the two patterns cover both attribute orders.
    private function extractMetaDescription(string $html): ?string
    {
        $patterns = [
            '/<meta\s[^>]*name\s*=\s*["\']description["\']\s[^>]*content\s*=\s*["\']([^"\']*?)["\']/si',
            '/<meta\s[^>]*content\s*=\s*["\']([^"\']*?)["\']\s[^>]*name\s*=\s*["\']description["\']/si',
        ];

        foreach ($patterns as $pattern) {
            if (preg_match($pattern, $html, $m)) {
                $desc = trim(html_entity_decode($m[1], ENT_QUOTES | ENT_HTML5, 'UTF-8'));

                return $desc !== '' ? $desc : null;
            }
        }

        return null;
    }

    // Group pages by the given field and keep values shared by two or more URLs.
    private function findDuplicates(Collection $pages, string $field): Collection
    {
        return $pages
            ->filter(fn (array $meta) => ($meta[$field] ?? null) !== null)
            ->groupBy(fn (array $meta) => $meta[$field], preserveKeys: true)
            ->filter(fn (Collection $group) => $group->count() > 1)
            ->map(fn (Collection $group) => $group->keys());
    }

    private function reportBrokenPages(): void
    {
        if ($this->brokenPages->isEmpty()) {
            $this->info('All pages returned HTTP 200.');
            $this->newLine();

            return;
        }

        $this->error("Found {$this->brokenPages->count()} page(s) with non-200 HTTP status:");

        $rows = $this->brokenPages->map(fn ($status, $url) => [$url, $status])->values()->all();

        $this->table(['URL', 'Status'], $rows);
        $this->newLine();
    }

    private function reportHreflangIssues(Collection $issues): void
    {
        if ($issues->isEmpty()) {
            $this->info('All hreflang annotations are valid.');
            $this->newLine();

            return;
        }

        $labels = [
            'missing_in_sitemap' => 'Alternate URL not found in sitemap',
            'missing_reciprocal' => 'Missing reciprocal hreflang link',
            'missing_self_ref' => 'Missing self-referencing hreflang',
        ];

        $this->error("Found {$issues->count()} hreflang issue(s):");

        $issues->groupBy('type')->each(function (Collection $group, string $type) use ($labels) {
            $this->newLine();
            $this->warn(' '.($labels[$type] ?? $type).':');

            $group->each(function (array $issue) {
                $this->line(" {$issue['loc']}");
                $this->line(" {$issue['detail']}");
            });
        });

        $this->newLine();
    }

    private function reportDuplicateUrls(Collection $duplicates): void
    {
        if ($duplicates->isEmpty()) {
            $this->info('No duplicate URLs found in the sitemap.');
            $this->newLine();

            return;
        }

        $this->error("Found {$duplicates->count()} duplicate URL(s) in the sitemap:");

        $rows = $duplicates->map(fn (int $count, string $url) => [$url, $count])->values()->all();

        $this->table(['URL', 'Occurrences'], $rows);
        $this->newLine();
    }

    // Print each duplicated value (cut to 80 characters) followed by the URLs that share it.
    private function reportDuplicates(Collection $duplicates, string $label): void
    {
        if ($duplicates->isEmpty()) {
            $this->info("No duplicate <{$label}> values found.");
            $this->newLine();

            return;
        }

        $this->error("Found {$duplicates->count()} duplicate <{$label}> value(s):");

        $duplicates->each(function (Collection $urls, string $value) {
            $truncated = mb_strlen($value) > 80 ? mb_substr($value, 0, 80) : $value;

            $this->warn(" \"{$truncated}\":");

            $urls->each(fn (string $url) => $this->line(" {$url}"));
        });

        $this->newLine();
    }

    private function printSummary(
        Collection $duplicateUrls,
        Collection $duplicateTitles,
        Collection $duplicateDescriptions,
        Collection $hreflangIssues,
    ): void {
        $this->newLine();
        $this->info('Audit Summary');

        $summaryLine = fn (string $label, Collection $items) => sprintf(
            ' %-30s %d',
            $label,
            $items->count(),
        );

        $this->line($summaryLine('Non-200 pages:', $this->brokenPages));
        $this->line($summaryLine('Duplicate URLs:', $duplicateUrls));
        $this->line($summaryLine('Hreflang issues:', $hreflangIssues));
        $this->line($summaryLine('Duplicate title:', $duplicateTitles));
        $this->line($summaryLine('Duplicate meta description:', $duplicateDescriptions));
    }
}